KDB2000

A System for Knowledge Discovery in Databases

LACAM

@ Dipartimento di Informatica - Universitŕ degli Studi di Bari - Via Orabona, 4 -70126 Bari

A short description

KDB2000 is a single tool which integrates database access, data preprocessing and transformation techniques, a full range of data mining algorithms as well as pattern validation and visualization. This integration aims to support the user in the entire Knowledge Discovery in Database (KDD) process enabling the user to see the same problem from many different perspectives for a thorough investigation.

In addition to supporting users in all the steps of the KDD process, KDB2000 also assists the user in the choice of some critical parameters in some data mining tasks.

Top of this page

System functionalities

Knowledge discovery is defined as the non-trivial process of identifying valid, novel, potentially useful, and ultimately understandable patterns in data and describing them in a concise and meaningful manner. This process is interactive and iterative, involving numerous steps with many decisions being made by the user. Information flows goes from one stage to the next, as well as backwards to previous stages. The main step is data mining, which involves the selection of the best algorithm for the task in hand, the application of the algorithm to the selected data and the generation of plausible patterns occurring in the data. Although this is clearly important, the other steps of the KDD process are equally critical for successful applications of data mining algorithms to real-world data.

KDB2000 supports users in all steps of the KDD process.

In the data acquisition step, the KDB2000 user can select an existing data source or can create a new data source to access and manage database information. Data of interest for the application (or target data) can be selected by means of an SQL query. The query can be created:

- by using a text editor (for advanced users),

- by using a visual interface, or

- by recalling a previously issued query stored in a repository.

The visual interface allows the user to create complex queries without using SQL statements. It guides the user through the process of building a query, step by step, selecting the tables involved in the query, creating joins with selected tables, choosing the columns, deciding how to summarize the data and how to sort the results, and choosing filters to include or exclude data according to some search criteria. All queries and answers are stored in a user workspace to be used in next analysis.

In data pre-processing step, the KDB2000 user may use both statistical methods and data visualization techniques in basic operations such as removing outliers, if appropriate, collecting the necessary information to model the noise or deciding on strategies for handling missing data. Statistical methods are also a useful way to understand the data content better. The tool computes some basic statistics for each attribute, such as mean, mode, median, maximum, minimum, correlation coefficient and standard deviation. When combined, these measures provide a means for determining the presence of invalid and skewed data. Data visualization techniques are used to help the user understand and/or interpret data. Histograms and pie charts are also available in the system. Visualization techniques help to identify distribution skews and invalid or missing values.

Data transformation step aims at transforming selected data in analytical data model that is needed for use in mining techniques. The analytical data model represents a consolidated, integrated and time dependent restructuring of the data which have been selected and pre-processed. KDB2000 provides the following data transformation tools:

- discretization: converts quantitative variables into categorical ones by dividing the values of the input variables into intervals. Intervals are regular or preserve a uniform distribution of data.

- scaling: most data mining algorithms can accept only numeric input in the range [0.0,1.0] or [-1.0,1.0]. In these cases, continuous variable values must be scaled.

- null value substitution: substitutes null values of continuous and discrete variables with mean and mode, respectively

- sampling: extracts a random set of items from the specified dataset. KDB2000 does the sampling using a reservoir algorithm (algorithm Z), which selects a random sample of n objects without replacing them from a pool of N objects, where the value of N is previously unknown. The algorithm Z outperforms current sampling methods in the sense that it extracts a random sample of a set of objects in one pass, using constant space and in

O(n(1 + log(N/n))) expected time, which is optimum.

- binarization: converts a discrete variable into a numeric representation using a set of attributes, one attribute for each possible nominal value. This transformation is especially useful for association rule mining, where binary representations of transactions are required for typical basket analysis.

The data mining step is central in the KDD process and uses the output of the previous steps. KDB2000 provides several algorithms for the following data mining tasks: classification, regression, clustering and association rule discovery.

- Classification means learning a function that classifies a data item into one of several predefined classes.

KDB2000 supports classification by means of a k-nearest neighbour (K-NN) algorithm or a decision tree induction algorithm.

- Regression is a learning function that maps a data item into a real-valued prediction variable.

KDB2000 supports regression by means of an innovative model tree induction algorithm named SMOTI

(Stepwise MOdel Tree Induction).

- Clustering is a descriptive task where one seeks to identify a finite set of categories or clusters to

describe the data. Similar data items can be seen to be generated from the same component with a mixture of probability

distribution. The algorithm available in KDB2000 performs k-means clustering in one scan of the dataset.

- Association rules discover a model that describes relations between attributes.

These relations are rules in the form "X --> Y", where X and Y are a set of binary literals called items and

the intersection between X and Y is empty.

Since the available association rule mining algorithms are devoted to binary data tuples,

it is necessary for discrete variables to be transformed into a binary representation.

In KDB2000 this is performed automatically by means of the binarization function, already implemented for

the data transformation facilities.

Finally, KDB2000 supports the user in the evaluation of the predictive accuracy of decision and model trees,

by means of the k-fold Cross-Validation (k-CV) approach.

In the k-CV approach, each dataset is divided into k blocks of near-equal size and near-equal distribution of class values,

and then, for every block, the (decision or regression) method is trained on the remaining blocks and tested on the hold-out

block. The estimate of the accuracy of the decision or model tree, built on the whole set of training cases,

is obtained by averaging the accuracy computed for each hold-out block

Top of this page

System Architecture

|

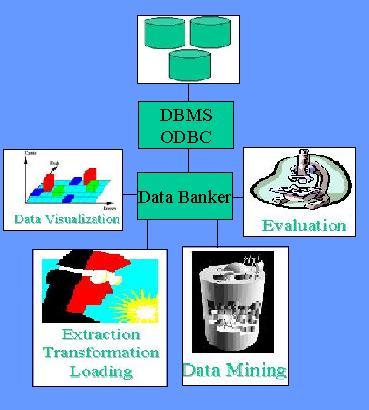

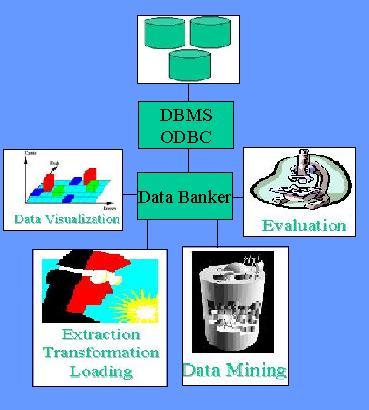

The general architecture of KDB2000, shown in Figure integrates a relational database management system,

such as Microsoft Access, with a set of Data Mining modules, with the Extraction,

Transformation and Loading (ETL) tool, as well as with the Visualization and Evaluation tools.

The Data Banker component allows access to data by some external relational data sources using the ODBC driver.

It connects to a specified database, executes an SQL query, and returns the

result set to the system. This component also allows the requesting system

to obtain metadata on table names, table columns, column data types and so on. This information can be used as

part of the data processing. Therefore, Data Banker extracts data from the data source and orchestrates the moving of it from one KDB2000 component to another. It also includes a storage subsystem that holds the user data both in a database and in a file.

Data Visualization tools are used to display data selected by the user and patterns discovered by the mining algorithms.

The Extraction, Transformation and Loading tool operates at the heart of the KDD process by extracting data from databases and transforming the data to make them suitable for subsequent analyses.

The Data Mining module of KDB2000 includes powerful algorithms to discover patterns in selected data according to the user task (classification, regression, clustering or association rule discovery).

Finally KDB2000 architecture includesan Evaluation module that collects results of several runs of some predictive data mining methods and computes average statistics.

|

Top of this page

Project team

Project Leader

Prof.

Donato Malerba

Current Staff

Annalisa Appice, Michelangelo Ceci

Top of this page

Download

KDB2000 runs under Microsoft Windows 98. To install the system you may need to download and install the MSagent Merlin

http://agent.microsoft.com/agent2/chars/merlin.exe

Top of this page

Publications

D. Malerba, F. Esposito, M. Ceci, A. Appice (2004).

Top-Down Induction of Model Trees with Regression and Splitting Nodes,

IEEE Transactions on Pattern Analysis and Machine Intelligence, 26, 5, 612-625.

A. Appice, M. Ceci., & D. Malerba (2002).

KDB2000: An integrated knowledge discovery tool.

In A. Zanasi, C. A. Brebbia, N.F.F. Ebecken, P. Melli (Eds.) Data Mining III, Series Management Information Systems, Vol 6, 531-540, WIT Press, Southampton, UK

D. Malerba, A. Appice, M. Ceci & M. Monopoli (2002).

Trading-off local versus global effects of regression nodes in model trees.

In H.-S. Hacid, Z.W. Ras, D.A. Zighed & Y. Kodratoff (Eds.),

Foundations of Intelligent Systems, 13 International Symposium, ISMIS'2002,

Lecture Notes in Artificial Intelligence, 2366,393-402,

Springer, Berlin, Germany.

D. Malerba, A. Appice, A. Bellino, M. Ceci, & D. Pallotta (2001).

Stepwise Induction of Model Trees.

In F. Esposito (Ed.), AI*IA 2001: Advances in Artificial Intelligence, Lecture Notes in Artificial Intelligence, 2175, Springer, Berlin, Germany.

D. Malerba, A. Appice, A. Bellino, M. Ceci, & D. Pallotta (2001).

KDB2000: Uno strumento per la scoperta di conoscenza nelle Basi di Dati.

In F. Esposito (Ed.), AI*IA 2001: Advances in Artificial Intelligence, Lecture Notes in Artificial Intelligence, 2175, Springer, Berlin, Germany.

Esposito, F., Malerba, D., Tamma, V. (1998).

Efficient Data-Driven Construction of Model-trees.

Proceedings of NTTS'98, Int. Seminar on New Techniques and Tecnologies for Statistics, pp. 163-168.

Esposito, F, Malerba, D, Semeraro, G, (1997) .

A Comparative Analysis of Methods for Pruning Decision Trees.

IEEE Transactions on Pattern Analysis and Machine Intelligence. PAMI-19, 5, 476-491.

Top of this page